The denial environment is no longer paced by human review. Large payers and their vendors now use software-assisted utilization review, predictive recovery models, automated claim edits, and peer-benchmarking logic to decide which claims are delayed, reduced, or rejected. For a small or mid-sized practice, that changes the economics of appeals immediately: the payer is operating at batch speed while the provider is still staffed for one-chart-at-a-time rebuttals.

That mismatch is why the old template-first workflow breaks down. A billing team can still win individual cases, but the queue compounds faster than humans can draft. Every 20- to 45-minute appeal adds to a backlog that favors the party with automation.

If denials are industrialized, appeals cannot stay artisanal.
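The economics can be sketched with simple arithmetic. Below is a minimal break-even model in Python. It assumes staff cost scales linearly with drafting time and counts wages only, leaving out benefits, follow-up calls, and fax handling. The figures are the illustrative ones used in the audio transcript below, not measured data: a $45 reduction (a roughly $170 Level 4 paid as a $125 Level 3), a $30 hourly rate, and 45 versus 3 minutes of drafting time.

```python
def appeal_cost(minutes: float, hourly_rate: float) -> float:
    """Staff labor cost of preparing one appeal, in dollars (wages only)."""
    return minutes / 60 * hourly_rate


def break_even_win_rate(minutes: float, hourly_rate: float, recoverable: float) -> float:
    """Minimum share of appeals that must succeed for appealing to pay off.

    Expected value of one appeal = win_rate * recoverable - cost,
    which is non-negative when win_rate >= cost / recoverable.
    """
    return appeal_cost(minutes, hourly_rate) / recoverable


# Illustrative figures only (taken from the transcript's example, not measured data).
RECOVERABLE = 170 - 125   # dollars lost to one Level 4 -> Level 3 downcode
HOURLY_RATE = 30          # assumed staff wage, excluding benefits

for minutes in (45, 3):
    cost = appeal_cost(minutes, HOURLY_RATE)
    rate = break_even_win_rate(minutes, HOURLY_RATE, RECOVERABLE)
    print(f"{minutes:>2} min appeal: cost ${cost:.2f}, break-even win rate {rate:.0%}")
```

Under these assumptions, a 45-minute appeal must succeed at least half the time just to cover wages. A 3-minute appeal breaks even at about a 3% win rate. Adding benefits and follow-up time raises the first threshold further.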

How insurers are using AI to deny claims

The public examples that keep surfacing all point in the same direction: insurer review is becoming more systematized, more standardized, and less dependent on deep chart-by-chart analysis.

Cigna and PXDX

Coverage of the PXDX litigation describes a review process capable of batch-scale denials, with handling times measured in seconds and plaintiffs alleging inadequate individualized review.
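PXDX is short for "procedure to diagnosis." Reporting on it, summarized in the transcript below, describes a rule that checks whether a billed procedure is paired with a diagnosis on a pre-approved list and denies the claim when it is not. The Python sketch below shows why such a check costs essentially nothing per claim: it is a set lookup that never opens the chart. The procedure and diagnosis identifiers are hypothetical placeholders. They are not Cigna's rules or any real code pairing.

```python
from dataclasses import dataclass

# Hypothetical placeholder rules: procedure -> diagnoses the edit accepts.
# These identifiers are illustrative and do not reflect any real payer policy.
APPROVED_DIAGNOSES: dict[str, frozenset[str]] = {
    "PROC_A": frozenset({"DX_1", "DX_2"}),
    "PROC_B": frozenset({"DX_3"}),
}


@dataclass(frozen=True)
class Claim:
    claim_id: str
    procedure: str
    diagnoses: tuple[str, ...]


def pxdx_style_edit(claim: Claim) -> str:
    """Return 'pay' if any billed diagnosis is on the procedure's list, else 'deny'.

    Note what is missing: the clinical note is never read.
    """
    allowed = APPROVED_DIAGNOSES.get(claim.procedure, frozenset())
    return "pay" if allowed.intersection(claim.diagnoses) else "deny"


claims = [
    Claim("c1", "PROC_A", ("DX_2",)),
    Claim("c2", "PROC_A", ("DX_9",)),   # diagnosis not on the list
    Claim("c3", "PROC_C", ("DX_1",)),   # procedure missing from the table entirely
]
for c in claims:
    print(c.claim_id, pxdx_style_edit(c))
```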

Humana and nH Predict

Litigation over Humana's use of nH Predict alleges that post-acute care decisions were driven by predicted discharge timelines that overrode the treating clinician's real-world judgment.

EviCore and outsourced utilization control

ProPublica's reporting on EviCore describes a large outsourced review infrastructure that insurers use to manage cost and utilization at scale. That matters because the economic pressure is not only clinical; it is operational and volume-based.

Automated downcoding and peer comparison

Denial is obvious. Downcoding is often harder to spot. Reporting on physician reimbursement fights, including the NBC News story behind the "guilty until proven innocent" framing, describes insurers and vendors using software-driven edits to downgrade claims without the kind of chart-level context clinicians expect. Karen Zupko & Associates (KZA) and MGMA describe the same trend from the practice side: claims are increasingly screened against structured data, patterns, and benchmarks before anyone meaningfully engages the note.

For many practices, this is the more corrosive problem. An outright denial gets attention. A reduced payment can slip into the revenue cycle as if it were a normal adjudication event.

| Traditional manual review | Automated downcoding |
|---|---|
| Periodic audit, manual chart request, human correspondence. | Claim data analyzed in real time by rules engines, benchmarks, or machine-learning models. |
| Focus on documentation sufficiency under CPT guidance. | Focus on outlier detection, peer comparison, or predicted coding norms. |
| Often produces an audit or record request. | Often produces immediate service-level reduction and revenue leakage. |
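The peer-comparison logic in the right-hand column can be illustrated with a deliberately simple Python sketch. It computes each provider's share of high-level visits and flags anyone more than a chosen number of standard deviations above the peer mean. It then reprices that provider's claims down to Level 3. The z-score threshold, the Level 3 cap, and the sample data are assumptions for illustration. Actual vendor models are proprietary and are not described in these sources.

```python
from statistics import mean, pstdev

# Hypothetical sample: provider -> billed E/M levels (e.g. 3 = Level 3 visit).
BILLED_LEVELS: dict[str, list[int]] = {
    "dr_a": [3, 3, 4, 3, 3, 4, 3, 3],
    "dr_b": [3, 4, 3, 3, 3, 3, 4, 3],
    "dr_c": [3, 3, 3, 4, 3, 3, 3, 3],
    "dr_smith": [4, 4, 5, 4, 4, 3, 4, 4],  # assume a panel of sicker patients
}


def high_level_share(levels: list[int]) -> float:
    """Fraction of a provider's visits billed at Level 4 or above."""
    return sum(1 for lvl in levels if lvl >= 4) / len(levels)


def flag_outliers(billed: dict[str, list[int]], z_threshold: float = 1.5) -> set[str]:
    """Flag providers whose high-level share exceeds the peer mean by z_threshold SDs."""
    shares = {doc: high_level_share(lv) for doc, lv in billed.items()}
    values = list(shares.values())
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return set()  # everyone bills identically; nobody stands out
    return {doc for doc, s in shares.items() if (s - mu) / sigma > z_threshold}


def reprice(levels: list[int], cap: int = 3) -> list[int]:
    """Downcode every claim above `cap`; no chart is consulted."""
    return [min(lvl, cap) for lvl in levels]


for doc in sorted(flag_outliers(BILLED_LEVELS)):
    print(doc, BILLED_LEVELS[doc], "->", reprice(BILLED_LEVELS[doc]))
```

In this toy data only `dr_smith` is flagged. Every one of that provider's Level 4 and 5 claims is then paid as Level 3, whatever the acuity of the panel. That is the "regression to the mean" problem the transcript describes.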

The human toll of AI prior authorization denials

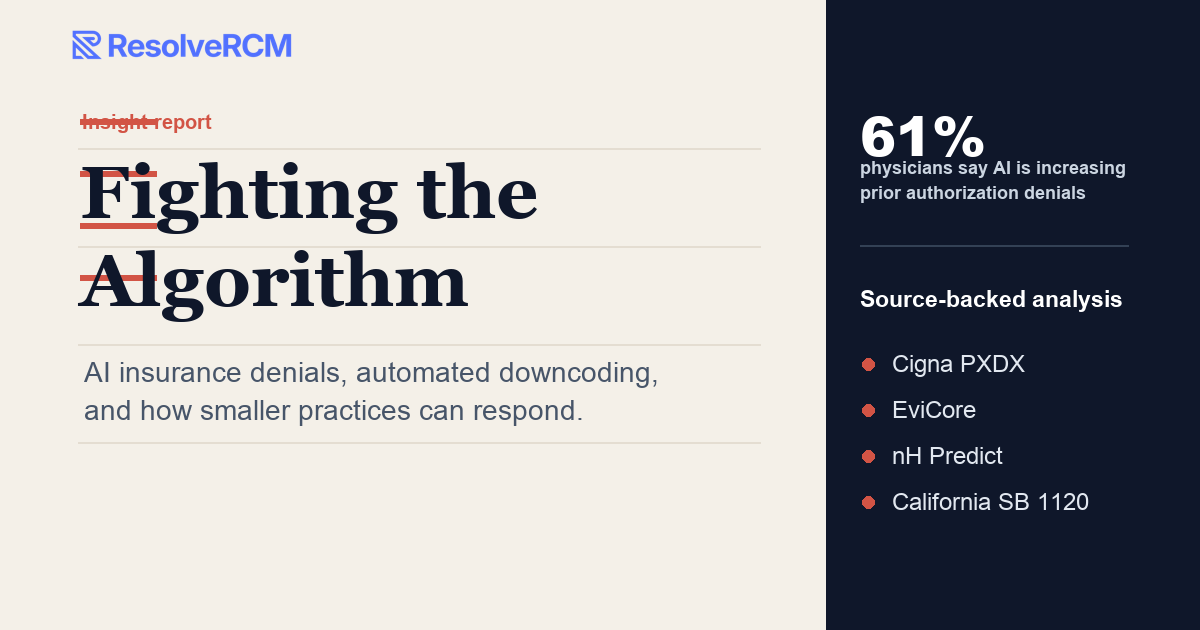

The human cost shows up in both patient access and staff exhaustion. In its February 24, 2025 release, the AMA said 61% of physicians are concerned that payer use of AI is increasing prior authorization denials. The same release reported that physicians average 39 prior authorizations per week, that the work consumes 13 hours of physician and staff time weekly, and that 89% say prior authorization contributes to burnout.

- Appeals become a throughput problem, not just a writing problem.

- Teams lose time to assembling rationale that should already be structured in the first response.

- MGMA advises practices to watch remittance advice for repeat adjustment language and payer-specific downcoding patterns that may indicate automated, AI-driven downcoding.
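The MGMA recommendation above is straightforward to operationalize once remittance data is exported. The Python sketch below assumes service lines have already been parsed out of the electronic remittance (X12 835) into simple records. The `RemitLine` fields, the payer names, and the adjustment wording are hypothetical. The sketch groups code changes by payer and keeps only combinations that repeat, which is the kind of pattern-level evidence worth tracking.

```python
from collections import Counter
from typing import NamedTuple


class RemitLine(NamedTuple):
    """One service line from a remittance, already parsed (hypothetical fields)."""
    payer: str
    billed_code: str
    paid_code: str
    adjustment_note: str


def downcode_patterns(lines: list[RemitLine], min_repeats: int = 2) -> dict[str, Counter]:
    """Count (billed -> paid, note) combinations per payer where the code was changed.

    Only combinations seen at least `min_repeats` times are kept, so one-off
    adjustments drop out and repeat patterns remain.
    """
    per_payer: dict[str, Counter] = {}
    for line in lines:
        if line.billed_code == line.paid_code:
            continue  # paid as billed; not a downcode
        key = (f"{line.billed_code}->{line.paid_code}", line.adjustment_note)
        per_payer.setdefault(line.payer, Counter())[key] += 1
    return {
        payer: Counter({k: n for k, n in counts.items() if n >= min_repeats})
        for payer, counts in per_payer.items()
        if any(n >= min_repeats for n in counts.values())
    }


remits = [
    RemitLine("Payer A", "99214", "99213", "level of service adjusted"),
    RemitLine("Payer A", "99214", "99213", "level of service adjusted"),
    RemitLine("Payer A", "99214", "99214", ""),
    RemitLine("Payer B", "99214", "99213", "documentation review"),
    RemitLine("Payer A", "99215", "99214", "level of service adjusted"),
]
for payer, patterns in downcode_patterns(remits).items():
    for (change, note), n in patterns.most_common():
        print(payer, change, repr(note), n)
```

With this sample, only Payer A's repeated 99214 to 99213 reduction survives the filter. The single-occurrence changes are treated as noise until they recur.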

California SB 1120 and the regulatory shift

California's SB 1120 took effect on January 1, 2025, and prohibits AI or similar software from making the final medical-necessity decision to deny, delay, or modify care. Summaries from Word & Brown and the bill text itself point to the same practical rule: medical professionals, not algorithms, must make final medical-necessity determinations.

At the federal level, CMS's WISeR model explicitly uses enhanced technologies together with human review in Original Medicare. That model has already drawn criticism from hospital and physician groups. The Federation of American Hospitals (FAH), in comments dated September 4, 2025, warned that the structure can create denial-oriented incentives and added administrative burden if it is not constrained with stronger safeguards.

How ResolveRCM helps practices respond to AI denials

ResolveRCM is not a generic template library. It is a workflow to help practices answer automation with their own structured response layer.

- Appeal letters with usable clinical logic. Generate denial-specific narratives grounded in payer policy, CPT framing, and the note you actually have.

- Medical necessity and MDM support. Turn fragmented chart facts into a defensible case for complexity, risk, and level-of-service integrity.

- Downcoding response. Spot the common structure of automated reductions and assemble a cleaner rebuttal faster than manual drafting allows.

- PHI-safe workflow. Protected health information stays local in the browser and is not transmitted to ResolveRCM servers.
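Here is how MDM maps to a visit level. Under the AMA's 2021 office and outpatient E/M guidelines, a visit qualifies for an MDM level when two of three elements meet or exceed that level. The elements are the number and complexity of problems addressed, the amount and complexity of data reviewed and analyzed, and the risk of complications from patient management. The Python sketch below encodes only that two-of-three rule and maps the result to established-patient codes 99212 through 99215. It is a hypothetical illustration, not ResolveRCM's implementation. It assumes each element has already been graded by a coder, and that grading is where the clinical judgment lives.

```python
from enum import IntEnum


class MDM(IntEnum):
    """MDM levels in ascending order of complexity."""
    STRAIGHTFORWARD = 1
    LOW = 2
    MODERATE = 3
    HIGH = 4


# Established-patient office visit codes by MDM level (2021 AMA E/M guidelines).
ESTABLISHED_CODE = {
    MDM.STRAIGHTFORWARD: "99212",
    MDM.LOW: "99213",
    MDM.MODERATE: "99214",
    MDM.HIGH: "99215",
}


def mdm_level(problems: MDM, data: MDM, risk: MDM) -> MDM:
    """Apply the two-of-three rule.

    The supported level is the highest one that at least two elements meet or
    exceed, which is the middle value of the three graded elements.
    """
    return sorted((problems, data, risk))[1]


# Hypothetical grading: two chronic conditions managed with a prescription-drug
# decision (moderate problems, moderate risk), but only limited data review.
level = mdm_level(problems=MDM.MODERATE, data=MDM.LOW, risk=MDM.MODERATE)
print(level.name, ESTABLISHED_CODE[level])  # MODERATE 99214
```

The example lands on moderate MDM (99214, the Level 4 visit discussed in the transcript) because two elements reach moderate even though data review is only low. An appeal that lays out each element explicitly makes this same argument in prose.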

Audio transcript

This is a lightly edited automated transcript generated from the current audio file. It has been cleaned for obvious product names, payer names, and readability, but it may still contain minor transcription errors.


The scene that plays out, gosh, thousands of times a day, right? In every city, every town in this country, you know, that sound, that distinctive crinkle of the sanitary paper when you shift your weight on an exam table? Oh, yeah. Everyone knows that. Right. And you are sitting there. Maybe your legs are dangling a bit, feeling that very specific mix of vulnerability and hope. And the doctor is standing right in front of you, a human being who spent, what, a decade in medical school and residency?

At least a decade, yeah. And they are listening to your heart. They're looking at that mole that's been worrying you. They are making a decision right there in the room about what needs to happen to, well, to save your life or fix your pain. It's really the fundamental social contract of medicine, isn't it? Exactly. You trust the doctor and the doctor treats you. That is the baseline expectation we all have when we walk into a clinic.

That is the contract. But here's the twist. And this is what we really need to sit with today in this deep dive: while that intimate human interaction is happening, there's actually a third party in the room. An invisible one. Yes. You can't see them. They're hundreds of miles away, likely sitting in a server farm kept at a cool 65 degrees. Right. It is a computer program. And while your doctor is saying, yes, let's treat this, that program is scanning a code and in literally 1.2 seconds, it decides no.

And let's be very clear right up front: that no isn't just some glitch in the matrix. It is a calculated, profit-driven rejection. Yeah. It is a business model disguised as technological efficiency. And that is the premise of our deep dive today. We are looking at this invisible war, a true David versus Goliath battle that is currently raging inside the American healthcare system. Really is.

On one side, you have independent doctors and patients just trying to get care. And on the other side, massive insurance algorithms and AI designed to say no as fast and as cheaply as possible. It's a battle of attrition, and the weapon of choice for these insurance giants is something called downcoding or, increasingly, just flat-out algorithmic denial. It's a system where, as one of our sources put it, the doctor is essentially guilty until proven innocent. Guilty until proven innocent.

I want to pause on that because it feels so contrary to how we think justice or even just normal business should work. It completely flips the dynamic. That phrase comes straight from a Dr. Terry Wagner in Ohio, right? That's right. This comes from an NBC News report we analyzed for this. Dr. Wagner runs a family practice. He's been doing this for 28 years. A long time. Yeah. He's the kind of doctor who knows his patients' names, he knows their kids' names.

And he started noticing something really weird happening with his billing. Set the scene for us. What exactly was happening to him? Well, so he would see a patient, perform a complex exam, what's called a Level 4 visit in medical billing terms, and send the bill to Aetna. Okay. And a Level 4 visit, that's not just a standard checkup, right? No, not at all. A Level 4 isn't just a, hello, here's a prescription, goodbye.

It involves detailed decision making. So maybe you're managing multiple chronic conditions simultaneously like diabetes and hypertension. So this is real work. This is the heavy lifting of primary care. Exactly. Real medical judgment. So Dr. Wagner bills for that Level 4, which usually reimburses around $170. Okay. But then the payment comes back and Aetna has unilaterally changed it to a Level 3.

They pay him $125 instead. Wait, so they just, they just changed the bill without asking him. Yep. Without seeing the patient. Without asking for his notes, without looking at the patient's chart. They just decided based entirely on their algorithm that he shouldn't have billed a Level 4. Wow. They downcoded him. And here is the kicker. Dr. Wagner says, it's not like they came back and said, hey, we need more info.

They just pay the lower amount. It feels like if I went to a steakhouse, right, I order a ribeye, I eat the ribeye, and then I tell the waiter, well, my personal algorithm says this was a salad. Huh. That is a perfect analogy. Yeah. And the waiter, in this case, the doctor is too busy waiting on other tables to stop and argue with you for 45 minutes about the difference between meat and lettuce.

Right. But let me play devil's advocate for a second. We are talking about $45 here, 170 down to 125. Yeah. Losing 45 bucks doesn't sound like a catastrophe if it happens once. Why is this a crisis? Because you have to do the math on the volume. Okay. If you are a small practice, and that happens on dozens of claims a week, it is devastating. Dr. Wagner lost over $3,000 in six months just from this nickel and dime strategy.

$3,000. Yeah. And another doctor mentioned in that same report, a dermatologist named Dr. Sarah Jensen, she lost nearly $14,000 to Anthem. $14,000. Okay. That's a nurse's salary for a few months. That's new equipment. That's literally the difference between keeping the lights on or having to sell to private equity. Precisely. And remember, that is money they have already earned. They provided the care.

The insurance company just decided not to pay for it. It's theft by spreadsheet. It is a war of attrition. The insurance companies know that if they steal a little bit from everyone, constantly, it adds up to billions in profit. And because the individual amounts are so small, nobody fights it. And this really sets the stage for our mission in this deep dive. We are going to unpack the mechanics of this downcoding and these AI-driven denials.

We have to. It's the only way to understand what's happening. Right. We're going to look at the specific tools insurers are using, names you might not know like nH Predict and PXDX, and the actual human cost of these algorithms. Because it is absolutely not just about the money. It's about patient safety. When you drain resources from primary care, patients suffer. Exactly. We aren't just going to leave you depressed about the state of health care today.

No, there is hope. We are also going to look at how technology is striking back. We're going to talk about a tool called ResolveRCM, which is basically designed to arm doctors in this fight. It turns what used to be a 45 minute administrative nightmare into a three minute automated victory. It's the David finding his slingshot. Or I don't know, maybe a laser guided slingshot. Definitely laser guided.

Right. So let's get into the trenches. Section one, the rise of the algorithmic Goliath. We defined downcoding briefly, but help us understand the shift here, because audits have always existed. Right. Insurance companies have always checked to make sure doctors aren't cheating. That seems fair. Yes. Fraud prevention is completely legitimate. Upcoding, billing for more than you actually did, is a real problem, and it should be stopped.

Right. But what has changed is the method and the scale. It used to be that if an insurer suspected a doctor of overbilling, a human auditor would request the medical records, read them, and make a determination. A human reading the file, a novel concept. Right. But humans are slow, they get tired, and frankly, they are expensive to employ. So insurers like Cigna and UnitedHealthcare, they've all shifted to AI and predictive algorithms.

Okay. They don't look at the specific patient's chart anymore. They look at the doctor's patterns. What does that mean looking at patterns? Well, they compare Dr. Smith to every other doctor in the region. If Dr. Smith bills more level four visits than the average, the algorithm flags him as an outlier. Okay. So he's on a list. Exactly. And once you are flagged, the system automatically downcodes your claims to the average level.

But wait, what if Dr. Smith is just better? Or what if he specializes in sicker patients? I mean, if I'm the guy known for handling complex cases in my county, of course my billing is going to be higher than the guy who just does flu shots. That's the problem. The algorithm doesn't care about nuance. It only cares about regression to the mean. Wow. It assumes that anyone deviating from the average is upcoding, basically inflating their bills.

So it automatically corrects them down to the average. It effectively punishes you for treating sicker people. That is incredibly cynical. It literally encourages doctors to just treat simple things and refer the hard stuff away, which is exactly what we are seeing happen across the industry. But I really want to talk about the scale of this, specifically the speed. You mentioned 1.2 seconds in the intro.

Yeah. That statistic really stuck with me from the source material. This involved Cigna, right? Yes. This comes from a massive ProPublica investigation into Cigna's PXDX system. That stands for procedure to diagnosis. Right. PXDX. The system basically checks if a procedure matches a pre-approved list of diagnoses. If it doesn't match perfectly, it denies it. But don't medical directors have to sign off on denials?

I thought legally a doctor had to review a claim to say no. Technically, yes. That is the law. Medical necessity must be determined by a medical professional. But Cigna found a workaround. Of course they did. The computer does all the work, flags the denial, and then batches these thousands of denials to a medical director, a doctor employed by Cigna, to just sign off on. ProPublica actually tracked the timestamps on these sign-offs.

And what did they find? These doctors were signing off on denials in an average of 1.2 seconds per patient. 1.2 seconds. I can't even find the approve button with my mouse in 1.2 seconds. I certainly can't open a PDF file. Exactly. No human is reading a patient file, checking medical necessity, and making a clinical judgment in 1.2 seconds. It is physically impossible. It's just a click. The AI flags it, and the human rubber stamps it.

We are talking about massive volume here. One single doctor was found to have denied 60,000 claims in a single month. 60,000 denials in a month. That's not a doctor. That's a denial factory. That's a human bot. It is. And the implication is clear. The review is a sham. The computer has already decided. This really brings us to the real world consequences because we aren't just talking about billing codes here.

No. We're talking about people's lives. This is where it gets heavy. We have to move from the abstract math of $45 downcodes to the actual human cost. Because when you have a system built on deny first, pay later, that later sometimes never comes for the patient. No, it doesn't. And there's one story in the sources that really illustrates the stakes here. It's the story of John Cupp. Right.

This was in the ProPublica reporting about EviCore. Walk us through what happened to him. So John Cupp was a patient who needed a catheterization to check his heart. His arteries. Okay. This is a very standard procedure to see if there are blockages. His doctor, a trained cardiologist who knew his history intimately, requested prior authorization from his insurer, which was UnitedHealthcare.

Which basically means the doctor said, I need to do this test to keep this man safe. Correct. But UnitedHealthcare outsourced that decision to a third party vendor called EviCore. And EviCore's system denied the request. They said the test wasn't medically necessary. Did the doctor fight it? He did. He appealed it. He provided more information, explained the necessity, and EviCore denied it again.

They just ran out the clock. And what happened to John? He died of cardiac arrest months later. Oh man. That is absolutely devastating. It is. And what makes it even worse, if that's possible, is that ProPublica asked four independent cardiology experts to review Cupp's medical file after he had passed. And what did they say? Three of the four said the test was absolutely appropriate.

One even explicitly said it was certainly necessary and could have saved his life. So a computer algorithm or a rigid checklist used by a remote vendor effectively overruled a trained cardiologist and cost a man his life. That is the allegation. And the chilling part is, the system worked exactly as designed. Right. That's the horror of it. It wasn't a glitch or a mistake; the system is designed to create friction to delay high cost procedures.

In this case, the delay was fatal. And it's not just heart patients. There is this tool called nH Predict that sounds like an absolute nightmare for the elderly. It really is. This is an AI used by UnitedHealthcare and Humana via a company called NaviHealth. It basically predicts how long an elderly patient should need to stay in a nursing home or rehab facility after a hospital stay.

And "should" being the operative word there. Exactly. It uses a database of millions of patients to say, okay, a 75-year-old with a broken hip should be recovered in 14 days. And on day 14, the money stops, regardless of whether that specific 75-year-old can walk or even stand up. Regardless. The class action lawsuits filed against these companies allege that the algorithm systematically overrides the actual treating doctor's orders.

But here's the most shocking part for me. What's that? When these decisions are actually appealed and reviewed by an administrative law judge or a third party, the algorithm is found to be wrong roughly 90% of the time. Wait, wait, stop. 90% error rate. If I had a calculator that was wrong 90% of the time, I would throw it in the trash. If I had a car that only started 10% of the time, I'd scrap it.

How is a medical tool allowed to be wrong 90% of the time? Because the error is in the insurer's favor. Even if they lose the appeal 90% of the time, they know that most people won't appeal. Most families are too stressed, too tired, or just too confused by the bureaucracy to fight. Yeah. So the insurer saves money on all the people who just give up and take grandma home early. It's a calculated bet on human exhaustion.

Exactly. And the employees at these companies, the case managers, were allegedly pressured to stick to the AI's prediction no matter what. The source material mentions that employees were disciplined if they authorized care that deviated more than 1% from the AI's prediction. So the human is just there to serve the machine. Do what the computer says or lose your job. Essentially, the computer says you are healed, so you are kicked out of the facility.

It is forcing families to pay completely out of pocket, depleting their life savings, or just taking their loved ones home before they are medically safe. And meanwhile, the doctors on the front lines are just drowning. Dr. Wagner from earlier, he said, it's exhausting. It is the death of private practice. If you are an independent doctor, you cannot afford to fight every single one of these $45 cuts or these endless prior authorization denials.

No. You don't have the staff. You don't have the time. So you have a choice. You either see more patients in less time, which we all know is bad for care, or you sell your practice to a massive hospital system or private equity firm, which just consolidates the market and usually drives up prices anyway. Exactly. The irony here is so thick. This technology was supposed to make health care more efficient.

We'll use AI to streamline billing. Right. Instead, it is actively driving good doctors out of the profession and making patients sicker. So why is this happening? I mean, besides corporate greed, which is the easy answer, let's look at the actual economics, section three. The system is rigged. We mentioned EviCore earlier. Who exactly are they? EviCore is what's known as a third-party enforcer or a benefits manager.

Insurers hire them specifically to handle prior authorizations. And their business model is fascinatingly perverse. How so? They pitch a three-to-one return on investment to the insurers. A three-to-one ROI. So for every dollar the insurance company pays EviCore, EviCore saves them $3 in claims they don't have to pay out. That is their literal sales pitch. Denials for dollars, as ProPublica put it.

Wow. So their incentive is explicitly to deny care. It gets worse. In other cases, they have what are called risk contracts. What does that mean? This means EviCore gets a set budget to manage a population of patients. If they spend less on actual care than that budget, EviCore keeps the difference. That is a direct bounty on denials. Yes. If I deny this MRI, I keep the money that would have paid for it.

If I approve it, it comes right out of my bonus. Mm-hmm. Precisely. It creates a direct financial conflict of interest. You can't claim to be making decisions based strictly on medical necessity when your profit margin depends on finding things unnecessary. And the insurers know they can get away with it because of what we call the appeal trap. The appeal trap. This is the math that breaks the doctor's back.

Insurers know that less than 0.2% of denied claims are ever appealed. Less than 0.2%, that is virtually zero. Why is it so incredibly low? Because the friction is just too high. Think about Dr. Wagner losing that $45. To get that $45 back, his staff has to print records, write a formal letter of medical necessity, fax it, yes, they still fax it, and follow up on the phone. Ugh, faxing.

It takes about 35 minutes of staff time to prepare a single appeal packet manually. The fax machine is a strategic weapon in health care. It guarantees that data is hard to transmit. And if the staff member is paid, say, $30 an hour plus benefits, it literally costs more to fight the denial than the denial is actually worth. Exactly. You lose money by winning. It is a calculated apathy.

The insurers bank on the fact that doctors simply cannot afford the administrative fight. Wow. So they accept the loss. And that $45 multiplied by millions of claims becomes billions of dollars in profit for the insurers. It is a perfect mousetrap. The algorithm denies it instantly for free. The doctor has to spend an hour to fight it. Goliath wins every single time. Unless the rules change, or unless David gets a new weapon.

Well, let's talk about the rules first, section four, the legal and regulatory backlash. Because people are starting to notice this, right? The system is straining. They are. Yeah. There is significant movement, particularly in California. We really have to talk about the Physicians Make Decisions Act or SB 1120. I love the name of that bill. Very subtle. Hey, remember us. The physicians, we exist.

It is pretty direct. It was signed relatively recently and went into effect. Basically it says that an algorithm absolutely cannot be the final decision maker on medical necessity. Okay. A human licensee, an actual real doctor must review the denial. So no more 1.2 second reviews. That is the hope. It mandates that AI can assist in the process, but it cannot replace human clinical judgment.

And other states like Oklahoma and Texas are looking at similar legislative models. That's great. But that is state law. The federal level is a bit more complicated. Right. I saw something in the sources about the WISeR model. This sounded like a bit of a controversy involving CMS, the Centers for Medicare and Medicaid Services. It's a massive controversy. There's a very strong letter from the Federation of American Hospitals to Dr. Oz, the CMS administrator.

They are incredibly concerned about this WISeR model. What is WISeR supposed to do? It stands for Wasteful and Inappropriate Service Reduction. The idea is to use technology companies to review claims in traditional Medicare, not just Medicare Advantage, to cut costs. So it brings the prior authorization headache to regular Medicare? That is the big fear. The hospitals are arguing that this model incentivizes vendors to deny care, exactly like we saw with EviCore.

They are worried it will replicate the absolute worst parts of Medicare Advantage, where access to care is constantly blocked by algorithms. The letter explicitly asks CMS to abandon the program entirely, or at least delay it because it relies on these opaque AI systems. And then there are the class action lawsuits. Yes. The lawsuits against Humana and UnitedHealthcare are huge. They allege that using nH Predict to override medical necessity is a fundamental breach of contract and fiduciary duty.

Fiduciary duty basically means you have a legal obligation to act in my best interest. Right. If I pay you premiums for insurance, you have a duty to cover my valid medical needs. If you use a rigged algorithm to systematically deny me care just to save yourself money, you are violating that core duty. So the lawyers are involved, the regulators are involved, but lawsuits take years.

Legislation takes forever. Doctors are bleeding money today. Correct. They need a solution right now. They need a tourniquet. And that brings us to section five, the counter strike. If the problem is AI speed versus human slowness, the solution seems to be AI versus AI. Exactly. Enter ResolveRCM. So tell us about ResolveRCM. Is this just another piece of bloated software that makes doctors hate their computers even more?

Huh. No, this is actually designed to sit completely outside the clunky EHR systems. It's a browser-based tool. Okay. The core promise is simple. It takes that 45-minute nightmare of creating a manual appeal packet and cuts it down to three or four minutes. From 45 minutes down to three minutes. That changes the math entirely. It reverses the economics. Mm hmm. Remember, the insurer wins because it costs too much for the doctor to fight.

If it only takes three minutes, it suddenly becomes profitable to fight for that $45. How does it work? Walk us through the workflow. Imagine I'm a billing specialist. I just got a denial from Aetna on my screen. What do I do? It's surprisingly straightforward. Yeah. Step one, the user inputs the denial code, so let's say "medical necessity not established," and pastes in the relevant chart notes from the doctor.

Okay. Just copy and paste. Step two is the AI processing. But the tool doesn't just use some generic template. It actively analyzes the clinical notes to extract the specific evidence needed to refute that exact denial. Wow. It reads the note like a highly trained medical auditor. It finds the chronic kidney disease mentioned, the prescription management details, the review of MRI results that the insurance algorithm completely ignored.

So it reads the chart better than the insurance company did. It speaks Goliath language. Much better. And step three is the really cool part. It generates what they call a medical decision making sheet, an MDM. What is that? MDM is the standard rubric used to determine billing levels. It's based on AMA guidelines, things like risk of complications, amount of data reviewed, overall complexity of the problem.

Right. ResolveRCM's AI actually builds an argument. It says this was a high complexity visit because the patient had these three chronic conditions and we reviewed these specific MRI results. So it's building the legal and medical argument for the doctor automatically. Yes. And finally, step four. It generates the full packet, a professional appeal letter, the MDM summary and a checklist of attachments needed all in a few minutes.

And this is defensible, right? That's a word they use a lot in the materials. That is key. It's not about tricking the insurer. It's about providing the exact documentation the insurer's algorithm is looking for, which humans often forget to include just due to fatigue. Yeah. Humans get tired. The AI doesn't get tired. It ensures the packet is airtight and complete. I noticed they emphasize it's EHR agnostic and HIPAA-conscious.

Why does that matter for a small practice? EHR agnostic means you don't have to spend six months trying to integrate it with your hospital's legacy software. It just runs in the browser. Huge time saver. And HIPAA-conscious means the patient health info stays strictly in the local browser storage. It's not being sent to a public cloud in a way that risks privacy breaches. It's a very clever design to completely bypass the hospital IT bureaucracy.

And the pricing model: I saw something like $349 a month for the pro plan. For a medical practice, that is absolute peanuts if it recovers even a few claims. Exactly. If you recover just three or four of those $125 downcodes, the tool literally pays for itself. Everything after that is pure profit reclaimed from the insurer. This feels like a massive strategic shift. It's not just about efficiency.

It's about what they call flooding the zone. That is the exact phrase. If doctors start appealing absolutely everything using ResolveRCM, the insurers' 0.2% appeal-rate calculation completely breaks down. Suddenly they're flooded with tens of thousands of legitimate, highly documented appeals. Because now it's cheap enough for the doctors to do so. Right. The algorithm no longer guarantees free money for the insurer.

It forces the insurance company to actually use human labor to review the claims, which costs them money. It destroys the core profitability of the automatic denial. It levels the playing field. David doesn't just have a slingshot anymore. He has a machine gun of documentation. Huh, a documentation machine gun. I like that. But yes, it forces the insurer to spend their own money to process the appeal.

So looking ahead to the big picture, section six, the future of the battle. Is this just going to be robots fighting robots forever in health care? We are definitely entering an AI arms race, payer AI versus provider AI. The insurers will inevitably get smarter algorithms. They will probably use generative AI to read AI-written appeals. Robot lawyers arguing with robot auditors, great. Exactly.

But the only way to really solve this beyond just fighting better technically is transparency. The MGMA, the medical group management association, has some really interesting advice on this. They advise practices to track the data. If a payer is downcoding systematically, use that data. Don't just fight the individual claim. Fight the overall pattern. Take that aggregated data straight to the state insurance commissioner.

Right. You can't just go to the regulator and say, it feels like they're cheating. You have to show them the spreadsheet. Exactly. Doctors want to know the rules of the game. If there is an algorithm denying care, show us the logic. Don't just say "computer says no." Transparency is the absolute only way to de-escalate this war. But until then, tools like ResolveRCM seem like the only viable defense for private practice.

They really are. In the age of algorithms, flawless documentation is your best defense. And if you can automate that documentation, you finally have a fighting chance. So let's wrap this up. We've covered a lot of ground today. The Goliath here is massive. These automated denial machines like nH Predict and EviCore that are clearly putting profits way over patients. And the David is fighting back with regulatory pushback and these incredible new tools like ResolveRCM that empower doctors to fight back efficiently.

I want to leave you, the listener, with a final thought, a provocative one. We've talked a lot about AI denying care. We've talked about AI writing appeals to get that care back. It raises a terrifying question. If the AI is making the denial and the AI is writing the appeal, who is actually looking at the patient? That is the scary part. We are abstracting the human entirely out of healthcare.

My hope, and it's an optimistic one, is that by automating this bureaucratic war, by letting ResolveRCM handle the paperwork fight, we can free up the human doctors to actually talk to human patients again. That's the dream. ResolveRCM doesn't replace the doctor. It protects the doctor's time so they can focus on the patient in front of them. Exactly. That is the best case scenario we should all be rooting for.

So call to action time. If you are a provider listening to this deep dive, stop accepting the guilty until proven innocent model. Explore tools like ResolveRCM. Fight back because your patients desperately need you to win this one. Documentation is your best defense. Don't bring a fax machine to an AI fight. Well said. Thanks for diving deep with us today. This is a story that is going to keep evolving and we'll definitely be watching it.

Stay curious, stay informed, and we'll catch you on the next one.

Sources

Where a journal article is not yet live on the journal site, the accessible preprint or summary link is listed instead.

- NBC News story mirror on downcoding: Inside the fight between doctors and insurance companies over downcoding

- ProPublica: “Denials for Dollars” and EviCore's role in outsourced utilization review

- ACDIS / HealthLeaders: coverage of the Cigna PXDX lawsuit and reported batch-denial volume

- AMA: physicians concerned AI increases prior authorization denials

- MGMA: combatting payer downcoding in the age of AI

- Federation of American Hospitals: WISeR opposition comments and denial-risk concerns

- Dana G. Jones, “Artificial Intelligence Can Kill You” (accessible SSRN paper; forthcoming in The Elder Law Journal)

- Word & Brown: California law prohibits using AI as basis for claims denial

- ClassAction.org: Humana nH Predict allegations in post-acute care litigation

- Karen Zupko & Associates: The Rise of Automated Downcoding

- Counterforce Health: 2025 guide to fighting AI-driven insurance denials

- DrCatalyst: automatic downcoding threatens independent physician practices